I used to enjoy AI a lot, and I still think the technology is really cool, but lately I’m beginning to despise it. It spreads and nestles itself into every corner of our lives, and it rots whatever it touches, be it the humans that rely on it or the projects in which it’s used. I see so many open source projects tainted with it that it’s almost impossible to avoid. It’s sad. The generations that will grow up with AI will be fucked.

The generations that will grow up with AI will be fucked.

Eh. That’s something every single generation before us in at least the past 150 years has been saying about other new society-changing stuff. They’ll be fine, society just changes.

Generations that will grow up with social media will be fucked.

Generations that will grow up with internet will be fucked.

Generations that will grow up with video games will be fucked.

Generations that will grow up with computers will be fucked.

Generations that will grow up with morning-after pills will be fucked.

…

…

Yeah, sorry but I have to disagree with you pretty hard there. Generations that grew up with social media, internet, video games, … they are fucked. We’ve been watching the fuckening for a long time now. Saying that they haven’t been fucked is reminiscent of my grandparents saying ADHD and Anxiety aren’t real.

Are they really more fucked than generations who didn’t have access to social media, internet, and video games? It seems to me that you are biased by the negative effects these had, and ignoring the positive ones.

Saying that they haven’t been fucked is reminiscent of my grandparents saying ADHD and Anxiety aren’t real.

How is that in any way comparable? I’m not saying the downsides of social media, the internet, and video games aren’t real. I’m saying “People growing up with X will be fucked” is something every generation has said while ignoring the positive impacts. It’s a cognitive bias along the lines of rosy retrospection.

Relevant section:

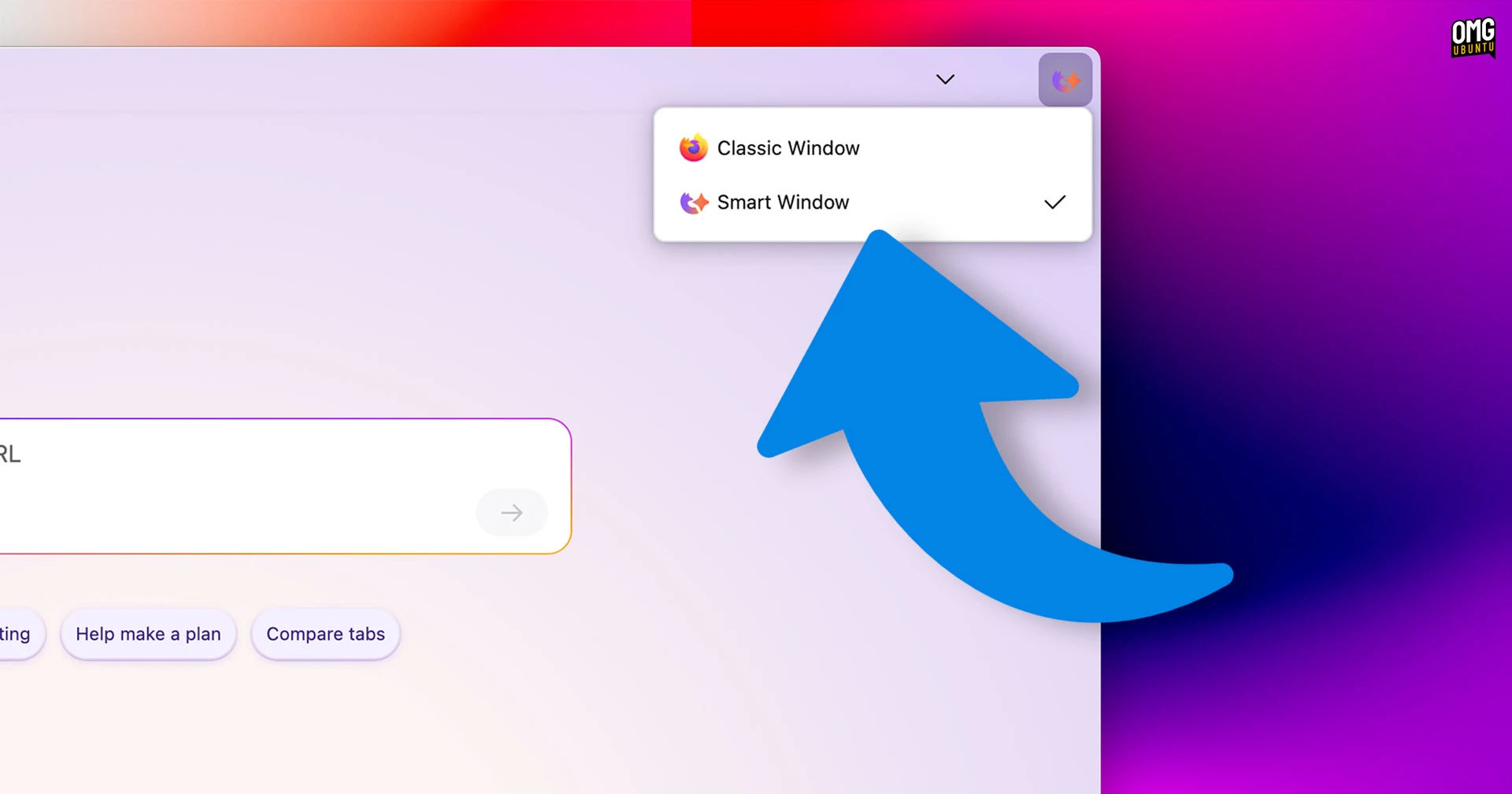

Smart Window uses ‘memories’, things Mozilla says “…it learns from your activity” to inform its responses.

You can delete memories individually, and you can set any given chat session to not use/store them.

Fine so far.

The problem? My memory list isn’t populated with things Smart Window learned since I enabled it. Oh no.

It has activity going back months. We’re talking searches and website interactions from long before I enabled this feature.

Firefox just handed that history to the AI models to plough through, without telling me upfront.

I found this the creepiest aspect of Smart Window.

Mozilla says this was a flub; it will refine the onboarding around Smart Window to limit memory formation to post-opt-in activity only. That’s obviously the right fix.

Because sharing a user’s prior browsing history with third-party AI models, silently, on feature activation, without any heads-up? Yeah, a bit icky – but that’s the price of testing features that aren’t finished, I guess.

There’s also an option to bring your own LLM, with fields for model name, endpoint, and API token available for entry when the manual option is enabled. However, the page itself warns local models may not work correctly.

It looks like there’s an option for people to self-host too. You won’t have to send your history to someone else’s computer.

If it’s anything like how they handled the AI sidebar, this option is going to get hidden before it hits production.

It would be really cool if they didn’t do that this time.